4 Risks Supply Chain Leaders Can’t Ignore with Humanoid Robots

Humanoid robots are starting to show up in places where, until very recently, they were science fiction: walking warehouse aisles, handling totes, supporting lineside replenishment, even inspecting equipment. For CEOs and supply chain leaders under pressure to find labor, boost resilience, and modernize operations, the allure is obvious. These machines can, in theory, drop into spaces built for people and take on tasks that have become increasingly hard to staff.

The question isn’t whether humanoids will show up in real operations. It’s whether your organization is ready for what happens next.

For supply chain leaders, four issues matter most: (1) safety, (2) continuity and downtime risk, (3) data and intellectual property, and (4) governance. If you ignore any one of them, the robots walking your aisles can quietly become a source of avoidable risk.

Most organizations understandably begin by asking: does the technology work, and what’s the ROI? Those are important questions. But as soon as humanoid robots move from a pilot demo to regular use, they stop being an innovation project and start becoming something else: a safety‑critical, security‑sensitive, contract‑driven part of your business that insurers, partners, and employees will care about. In other words, they become business assets and sources of operational and legal risk, whether you’ve planned for that or not.

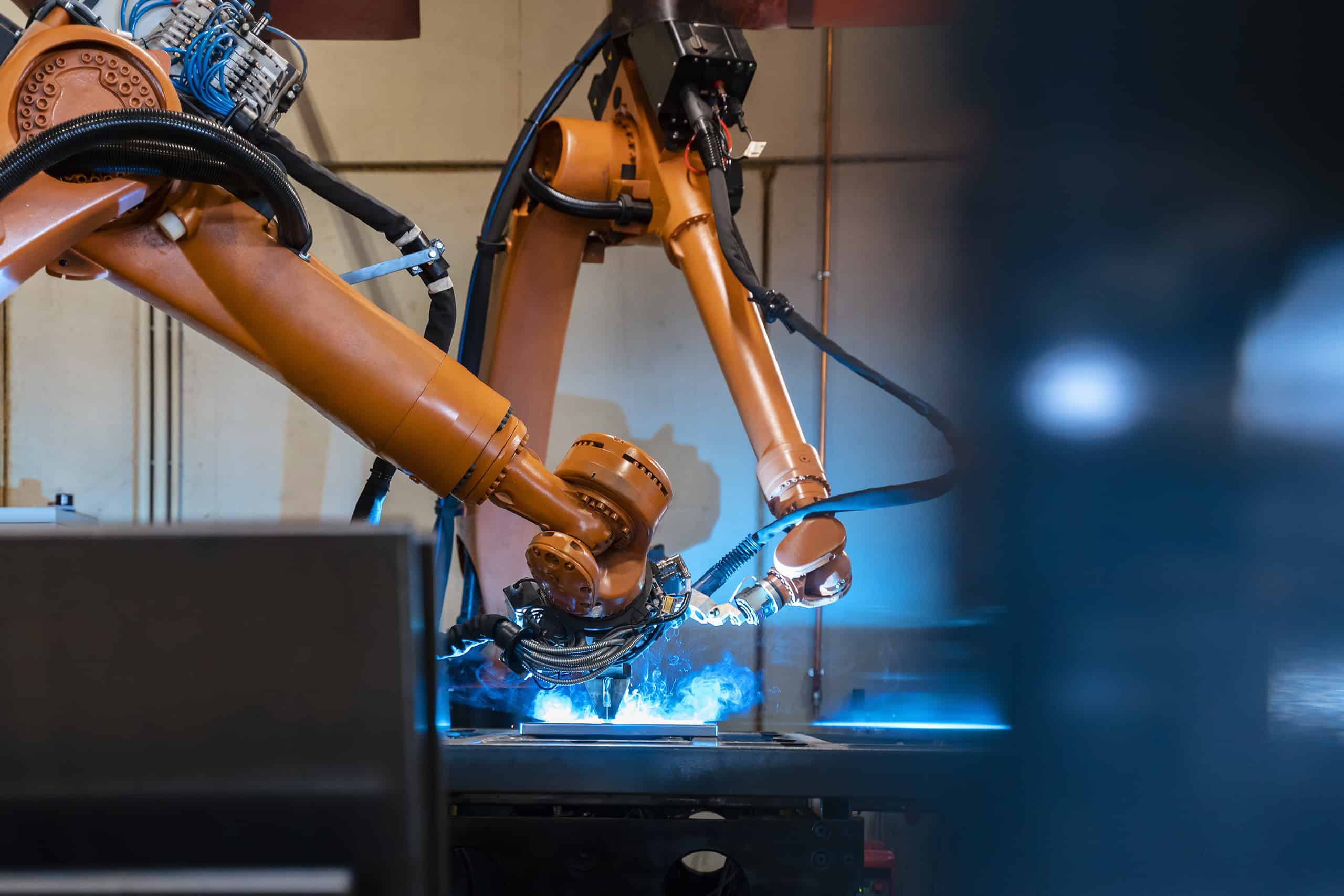

The hard part is that humanoids don’t fit neatly into existing boxes. Traditional industrial robots were bolted to the floor and fenced off. Collaborative robots brought people and machines closer together, but still in relatively defined areas. Humanoid robots combine mobility, manipulation, and perception in the same package. They walk through shared aisles, reach into racks, and operate next to people whose behavior is not always predictable. That makes them operationally powerful and unusually complex to manage from a safety, risk, and governance standpoint.

1. Safety: Robots as co-workers, not just machines

Safety is the first and most obvious example. In the United States, the Occupational Safety and Health Act requires employers to provide a workplace free from serious, recognized hazards, and OSHA enforces that duty. Historically, when regulators looked at robots, they were looking at fixed industrial arms in guarded cells. Humanoids walking around a live warehouse are a very different picture. They’re more like coworkers than machines in cages.

Once you see them that way, the practical questions for executives are simple and uncomfortable. If a humanoid robot bumps into a worker and causes an injury, who is accountable? The facility operator responsible for workplace safety? The manufacturer that designed the robot? The systems integrator that tuned its behavior and routes? All of them? And how does that differ from two human workers bumping into each other? If you haven’t defined that allocation of responsibility in your contracts and policies, you’re likely to find out the hard way. The same goes for training and procedures. When you onboard humanoids, do your safety programs and handbooks actually cover human‑robot interaction in shared spaces, or are you relying on informal “common sense” at the site level? Regulators and, later, anyone investigating an incident will care which one it is.

2. Continuity and downtime risk: When “extra help” becomes critical infrastructure

Once you get past basic safety, scalability and resilience become the next pressure points. In the early stages, it’s easy to think of humanoid robots as “extra help.” In practice, once they’re deployed, they tend to get woven into critical workflows quickly. If a humanoid is responsible for feeding a line or clearing finished goods and it goes down, it’s not just the robot that stops. Downstream tasks pause, work‑in‑progress piles up, supervisors scramble to reassign people, and your ability to hit your shipping window or production schedule is suddenly at risk.

When that happens, the character of your risk changes. Robot downtime translates into missed SLAs, strained supplier relationships, customer penalties, and uncomfortable conversations with insurers. If your existing customer contracts and supply agreements were written with a fully human workforce in mind, they probably don’t address what happens when automation becomes a single point of failure. Nor do many current vendor contracts explicitly commit robot suppliers and integrators to uptime targets, response times, and escalation paths that reflect the real cost of downtime on your side. That’s not a technical problem. It’s a business, contractual, and risk‑management problem that executives need to think through and address early on.

3. Data and IP: Who owns the advantage you’re building?

Humanoid robots also generate an enormous amount of information: sensor feeds, video, logs, performance metrics, annotations, and more. They force you to design and refine workflows, orchestration logic, and “playbooks” for how humans and robots share tasks and space. Over time, those workflows, datasets, and tools become a big part of your competitive advantage. They’re what make your deployment better, faster, and safer than your competitors’.

That naturally raises a harder question: who actually owns that advantage? In many standard agreements, vendors retain broad rights over anything related to their systems. Without clear boundaries, you can end up in a situation where the robot manufacturer or integrator has the right to use data from your operations to train its models and improve its products—and then take those improvements straight to your competitors. Or you spend heavily to co‑develop integration tools and task libraries that you assume are “yours,” only to discover they sit entirely under the vendor’s control. If you ever try to switch providers, you find you can’t take your own integration logic and datasets with you.

None of those outcomes is inevitable, but avoiding them requires addressing data and IP questions up front. Who owns “operational data” generated in your facilities? Under what conditions can your vendors use it, and for whose benefit? How do you distinguish their pre‑existing technology from the workflows, labels, and models you helped create? If robots get smarter because they have lived in your environment, do you get any rights to that intelligence? These aren’t abstract questions; they determine whether robot deployments build lasting advantage for your business or mostly for someone else’s.

4. Governance: Who actually owns the decisions?

All of these threads tie back to one final theme: governance. Early pilots often succeed because a small, motivated group of people makes things work through sheer force of will. But as you scale, regulators, insurers, and business partners stop caring about enthusiasm and start caring about structure. Who owns robot operations? Who approves changes in tasks and behaviors? How are incidents documented and learned from? Where do legal, safety, IT, and operations intersect in decision‑making? If you can’t answer those questions clearly, someone else will answer them for you after an incident or dispute.

For most organizations, getting this right means treating humanoids not just as a technology bet but as a four‑part risk problem: safety, continuity, data and IP, and governance.

None of this is an argument against humanoid robots. If anything, it’s the opposite: the companies that move early and thoughtfully will have a real edge. But “thoughtfully” in this context means bringing risk, safety, security, and operations expertise into the room on day one, not after the robots are already walking your aisles.

If your organization is exploring or piloting humanoid robots and your policies and assumptions still reflect a world of fenced‑in automation and human‑only workflows, now is the time to catch up.

This article originally appeared on Supply & Demand Chain Executive in April 2026.