From Static Compliance to Living Compliance: How Agentic AI Can Make Health Care Operations Safer

This piece was written in collaboration with Sam de Brouwer, co-founder and CEO of XY.AI, and Lamara de Brouwer, co-founder and CTO of XY.AI.

Executive Summary

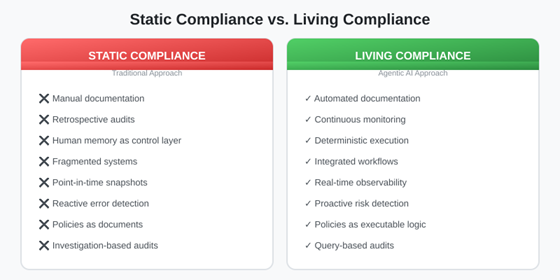

Health care compliance today is manual, retrospective, and brittle. Humans are expected to remember rules, document decisions, and reconstruct context months later during audits. The result is a system that doesn’t scale — one where patient safety, operational efficiency, and regulatory defensibility are perpetually at risk.

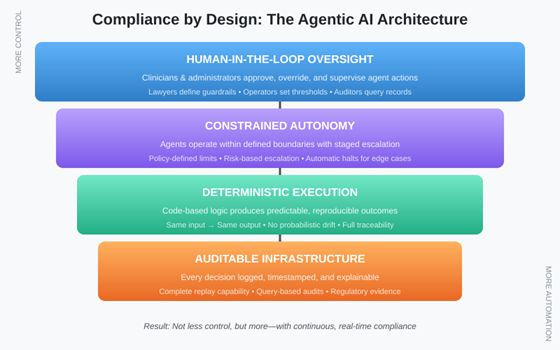

Agentic AI offers a fundamentally different approach. When designed with deterministic execution, constrained autonomy, and human-in-the-loop oversight, these systems enable continuous, auditable, real-time compliance. The result is not less control, but more.

This paper presents a joint legal and technical perspective on how health care organizations can transform compliance from a periodic burden into an always-on operational advantage — without venturing into clinical decision-making or creating new liability exposure.

1. The Compliance Reality Today

Walk into any health care organization, and you’ll find the same pattern: compliance lives in binders, spreadsheets, and the institutional memory of overworked staff. Regulatory requirements from HIPAA, CMS, state licensing boards, and payer contracts create a web of obligations that must be tracked, documented, and proven during audits that can occur months or years after the fact.

The fundamental problem is that human memory serves as the primary control layer. Staff must remember which forms require signatures, which authorizations need renewal, which coding guidelines changed last quarter, and which payer requires which documentation. When they forget — and they inevitably do — organizations face denied claims, audit findings, regulatory penalties, and in the worst cases, patient harm.

Current systems are fragmented by design. Electronic health records handle clinical documentation. Practice management systems handle billing. Separate platforms manage credentialing, contracting, and quality reporting. Each system maintains its own version of the truth, and reconciling them requires manual effort that rarely happens until an auditor demands it.

The result is retrospective compliance — organizations discover problems only when claims are denied, audits are scheduled, or regulators come calling. By then, the context that would explain decisions has evaporated, the staff who made those decisions may have moved on, and reconstruction becomes an expensive forensic exercise.

2. What Changes with Agentic AI?

Agentic AI represents a category shift from the chatbots and predictive analytics that have characterized health care’s AI adoption to date. Where traditional AI systems respond to queries or flag patterns, agentic systems act: they pursue goals, execute workflows, and interact with other systems — all within defined boundaries.

The distinction matters for compliance. A chatbot can tell a biller that a claim might be denied. An agentic system can validate that claim against payer requirements before submission, flag specific deficiencies, gather missing documentation, and either route for human review or proceed based on pre-defined rules. The compliance check becomes embedded in the workflow rather than layered on top of it.

This is what we mean by “compliance by design.” Instead of writing policies that humans must remember to follow, organizations encode those policies into executable logic that agents enforce automatically. The question shifts from “Did staff follow the policy?” to “Is the system configured correctly?” — a question that can be answered definitively and audited systematically.

Critically, effective agentic AI for compliance requires three architectural commitments: deterministic execution (the same inputs produce the same outputs), constrained autonomy (agents operate only within defined boundaries), and human-in-the-loop oversight (humans retain authority over consequential decisions). Without these, organizations simply trade one set of risks for another.

3. Safety, Accuracy, and Accountability

Health care leaders approaching agentic AI consistently raise three questions: What happens when the AI is wrong? Who is accountable? Can we explain this in an audit? These questions deserve serious answers, not dismissive assurances.

Deterministic vs. Probabilistic Systems

Large language models generate responses probabilistically — the same prompt can produce different outputs. This creates obvious problems for compliance, where consistency and predictability are paramount. Deterministic agentic systems address this by separating natural language understanding (which may use probabilistic models) from execution logic (which follows defined rules). The language model interprets the request; the execution engine performs the action. This architecture makes behavior predictable and testable.
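To make the separation concrete, here is a minimal Python sketch. The `interpret` function is a hypothetical stand-in for the language model (a keyword parser, so the example runs without one), and its only output is a structured `Intent`. The execution layer is a fixed allow-list (`ACTIONS`), so the same intent always produces the same action and anything outside the list is refused. All names are illustrative assumptions, not any particular product's API.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Intent:
    """Structured output of the interpretation layer (hypothetical schema)."""
    action: str
    claim_id: str


def interpret(request: str) -> Intent:
    """Hypothetical stand-in for the probabilistic language-model step.

    A real system might call an LLM here; this keyword parser keeps the
    sketch self-contained. Its only job is to emit an Intent."""
    words = request.lower().split()
    action = "check_status" if "status" in words else "validate_claim"
    return Intent(action=action, claim_id=words[-1].upper())


def validate_claim(claim_id: str) -> str:
    return f"validated {claim_id}"


def check_status(claim_id: str) -> str:
    return f"status requested for {claim_id}"


# Deterministic execution layer: a fixed allow-list of permitted actions.
ACTIONS: Dict[str, Callable[[str], str]] = {
    "validate_claim": validate_claim,
    "check_status": check_status,
}


def execute(intent: Intent) -> str:
    """Run an Intent through the allow-list; same Intent, same result."""
    handler = ACTIONS.get(intent.action)
    if handler is None:
        # Anything outside the allow-list is refused, never improvised.
        raise ValueError(f"action not permitted: {intent.action}")
    return handler(intent.claim_id)
```

Because `execute` depends only on the `Intent`, it can be tested exhaustively even if the interpretation step is swapped or retrained.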

Human-in-the-Loop Governance

Staged autonomy addresses the accountability question. For low-risk, high-volume tasks (verifying that a form is signed), agents can act autonomously. For higher-stakes decisions (submitting a complex claim, escalating a denial), agents surface recommendations for human approval. The threshold for autonomy becomes a policy decision that organizations can calibrate based on their risk tolerance and regulatory requirements. Humans remain in control; agents handle the mechanical burden.
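Staged autonomy can be expressed as a small routing policy. In this sketch the task names, the three risk tiers, and `AUTONOMY_CEILING` are hypothetical; raising or lowering the ceiling is the calibration step described above.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical risk tiers an organization might assign to agent tasks.
TASK_RISK = {
    "verify_form_signed": Risk.LOW,
    "submit_complex_claim": Risk.HIGH,
    "escalate_denial": Risk.HIGH,
}

# Policy setting: the highest tier an agent may complete without a human.
AUTONOMY_CEILING = Risk.LOW


def route(task: str, ceiling: Risk = AUTONOMY_CEILING) -> str:
    """Return 'auto' if the agent may act alone, else 'human_review'."""
    # Tasks nobody has classified are treated as high risk (fail safe).
    risk = TASK_RISK.get(task, Risk.HIGH)
    return "auto" if risk <= ceiling else "human_review"
```

Treating unclassified tasks as high risk is one reasonable default: a new task type reaches a human until someone deliberately decides otherwise.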

Explainability and Replay

For audit defensibility, every agent action must be logged with sufficient context to reconstruct why it happened. This means capturing not just the action and outcome, but the inputs that triggered it, the rules that applied, and the human authorizations in effect. When an auditor asks, “Why was this claim submitted this way?” the organization should be able to replay the exact decision sequence rather than relying on someone’s recollection.
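One way to make decisions replayable is to log, for each action, the inputs, the rule and version that applied, the human authorization in effect, and the outcome, then re-run the recorded rule on the recorded inputs on demand. The sketch below assumes a hypothetical versioned rule registry (`RULES`) with placeholder procedure codes, and adds SHA-256 hash chaining between entries as a tamper-evidence measure; the chaining is our addition, not something the text prescribes.

```python
import hashlib
import json

# Hypothetical versioned rule registry: (rule_id, version) -> pure function.
# Procedure codes are placeholders, not any payer's real list.
RULES = {
    ("prior_auth_required", 1): lambda inputs: inputs["procedure"] in {"P100", "P200"},
}

GENESIS = "0" * 64


def _digest(entry: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry."""
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only, hash-chained log of agent decisions."""

    def __init__(self) -> None:
        self.entries: list = []

    def record(self, action: str, inputs: dict, rule: tuple, authorized_by: str) -> bool:
        outcome = RULES[rule](inputs)
        entry = {
            "action": action,
            "inputs": dict(inputs),          # copy so later caller edits don't leak in
            "rule": list(rule),              # rule id and version that applied
            "authorized_by": authorized_by,  # human authorization in effect
            "outcome": outcome,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry["hash"] = _digest(entry)
        self.entries.append(entry)
        return outcome

    def verify_chain(self) -> bool:
        """Detect modified entries or broken links in the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

    def replay(self, index: int) -> bool:
        """Re-run the recorded rule version on the recorded inputs."""
        entry = self.entries[index]
        return RULES[tuple(entry["rule"])](entry["inputs"]) == entry["outcome"]
```

Keeping old rule versions in the registry is what makes replay possible after a rule changes. Hash chaining detects altered entries but not truncation of the most recent ones; anchoring the latest hash somewhere external would close that gap.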

4. From Policies to Systems

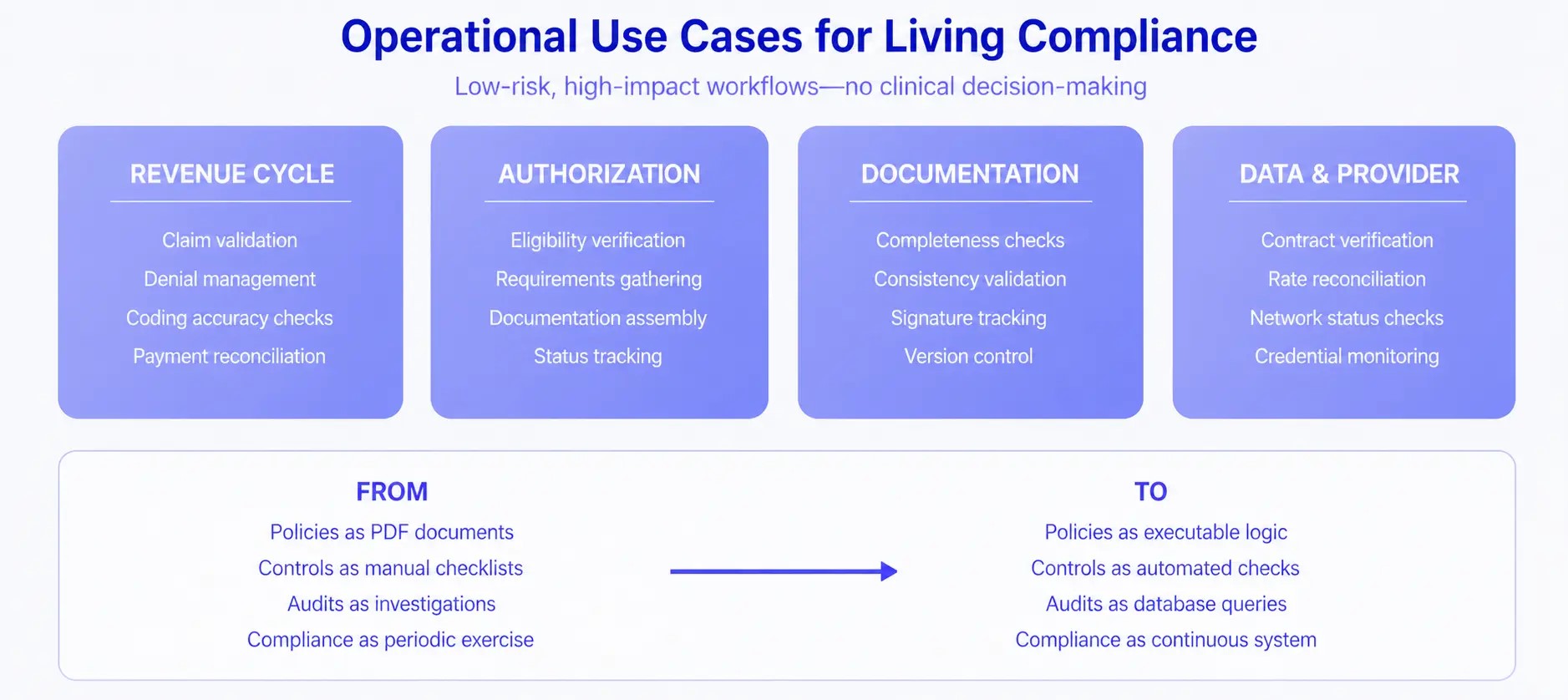

The most profound shift that agentic AI enables is the transformation of compliance from documentation to infrastructure. This section explains what that transformation looks like in practice.

Policies become executable logic. Consider a payer contract that requires prior authorization for certain procedures. In traditional compliance, this policy exists as a document that staff must remember to consult. In living compliance, the policy is encoded as a rule that the system evaluates automatically: when a procedure code matches the authorization requirement, the system initiates the authorization workflow before scheduling can proceed. The policy enforcement is guaranteed, not hoped for.
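A minimal sketch of the prior-authorization example, assuming a hypothetical in-memory rule table keyed by payer (`PRIOR_AUTH_RULES`) and placeholder procedure codes. Scheduling calls the rule before proceeding, so the check cannot be skipped.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical encoding of payer contract terms: payer -> procedure codes
# that require prior authorization. Codes are placeholders.
PRIOR_AUTH_RULES = {
    "PAYER_A": {"P100", "P200"},
    "PAYER_B": set(),
}


@dataclass
class Appointment:
    payer: str
    procedure_code: str
    auth_number: Optional[str] = None


def schedule(appt: Appointment) -> str:
    """Gate scheduling on the encoded prior-authorization rule."""
    if appt.payer not in PRIOR_AUTH_RULES:
        # Unknown payer: fail closed rather than assume no requirement.
        return "blocked: no rules configured for payer"
    needs_auth = appt.procedure_code in PRIOR_AUTH_RULES[appt.payer]
    if needs_auth and not appt.auth_number:
        # A real system would start the authorization workflow here.
        return "blocked: prior authorization workflow started"
    return "scheduled"
```

The sketch fails closed for payers with no configured rules. Whether that suits a given organization is a policy decision, but it avoids silently treating a missing configuration as "no authorization needed."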

Controls become automated checks. Manual compliance checklists — Did the patient sign the consent? Is the provider credentialed for this service? Does the documentation support the code? — become automated validations that run continuously. Deviations trigger alerts or blocks in real time rather than showing up in quarterly audits.
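The checklist questions above map naturally to small check functions that run together. This sketch assumes a hypothetical `Encounter` record and credentialing table; each check returns a deviation message or `None`.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Encounter:
    consent_signed: bool
    provider_id: str
    billed_code: str
    documented_codes: set


# Hypothetical credentialing table: provider -> service codes they may bill.
CREDENTIALS = {"DR-1": {"S10", "S20"}}


def check_consent(e: Encounter) -> Optional[str]:
    return None if e.consent_signed else "consent not signed"


def check_credential(e: Encounter) -> Optional[str]:
    if e.billed_code in CREDENTIALS.get(e.provider_id, set()):
        return None
    return f"provider {e.provider_id} not credentialed for {e.billed_code}"


def check_documentation(e: Encounter) -> Optional[str]:
    if e.billed_code in e.documented_codes:
        return None
    return f"documentation does not support {e.billed_code}"


# Each manual checklist item becomes one function; adding a control means
# appending to this list.
CHECKS: List[Callable[[Encounter], Optional[str]]] = [
    check_consent,
    check_credential,
    check_documentation,
]


def run_checks(e: Encounter) -> List[str]:
    """Return every deviation found; an empty list means the encounter passes."""
    return [msg for check in CHECKS if (msg := check(e)) is not None]
```

Running all checks and returning every deviation, rather than stopping at the first, gives reviewers the full picture in one pass.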

Audits become queries. When compliance state is maintained systematically, audit response transforms from investigation to reporting. “Show me all claims submitted without required authorization” becomes a database query that returns in seconds, not a weeks-long document review. The organization’s compliance posture becomes observable at any moment, not just during audit preparation.
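To illustrate "audits become queries," here is a sketch using Python's built-in `sqlite3` module with an in-memory database. The table layout and the three rows are illustrative assumptions; the point is that once authorization requirements and outcomes are recorded as data, the auditor's question is a single `SELECT`.

```python
import sqlite3

# Illustrative schema and rows; a real system would populate this table from
# the agents' decision log rather than by hand.
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE claims (
        claim_id       TEXT PRIMARY KEY,
        procedure_code TEXT NOT NULL,
        auth_required  INTEGER NOT NULL,  -- 1 if an encoded rule required authorization
        auth_number    TEXT,              -- NULL if none was obtained
        submitted_on   TEXT NOT NULL
    );
    INSERT INTO claims VALUES
        ('C-1', 'P100', 1, 'AUTH-9', '2024-01-05'),
        ('C-2', 'P100', 1, NULL,     '2024-01-06'),
        ('C-3', 'P300', 0, NULL,     '2024-01-07');
    """
)

# "Show me all claims submitted without required authorization."
MISSING_AUTH = """
    SELECT claim_id, submitted_on
    FROM claims
    WHERE auth_required = 1 AND auth_number IS NULL
    ORDER BY claim_id
"""
findings = conn.execute(MISSING_AUTH).fetchall()
```

With the sample rows above, `findings` holds only claim `C-2`: `C-1` has an authorization on file, and `C-3` never required one.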

5. Practical Use Cases

The principles above apply most naturally to operational workflows that are high-volume, rule-governed, and administratively burdensome — but not clinically sensitive. By focusing on operations rather than clinical decision-making, health care organizations can capture significant value while maintaining low risk and high adoption.

Revenue cycle workflows offer immediate opportunities. Agents can validate claims against payer requirements before submission, identify coding inconsistencies, manage denials by assembling required documentation automatically, and reconcile payments against expected reimbursement. Each of these tasks follows defined rules that can be encoded and executed systematically.
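Payment reconciliation is a good example of a rule that encodes cleanly. This sketch assumes a hypothetical table of contracted rates per payer and procedure code, with illustrative amounts, and uses `Decimal` to avoid floating-point rounding on currency. The tolerance parameter is also an assumption.

```python
from decimal import Decimal
from typing import Optional, Tuple

# Hypothetical contracted rates per (payer, procedure code); amounts illustrative.
CONTRACT_RATES = {
    ("PAYER_A", "P100"): Decimal("250.00"),
    ("PAYER_A", "P200"): Decimal("1200.00"),
}


def reconcile(
    payer: str,
    code: str,
    units: int,
    paid: Decimal,
    tolerance: Decimal = Decimal("0.01"),
) -> Tuple[str, Optional[Decimal]]:
    """Compare a payment with the contracted amount.

    Returns (status, variance), where variance = paid - expected."""
    rate = CONTRACT_RATES.get((payer, code))
    if rate is None:
        # No encoded rate: report it rather than guess an expected amount.
        return ("no_contract_rate", None)
    expected = rate * units
    variance = paid - expected
    if abs(variance) <= tolerance:
        return ("matched", variance)
    return ("underpaid" if variance < 0 else "overpaid", variance)
```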

Prior authorization is perhaps the highest-impact application. The current prior authorization process is universally despised: providers spend hours gathering requirements, submitting requests, and tracking status across multiple payer portals. Agentic systems can verify eligibility, identify authorization requirements, assemble documentation from clinical records, submit requests, and monitor status — all while maintaining complete audit trails of every action taken.
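One way to keep a complete trail across these prior-authorization steps is to model each request as a state machine that permits only defined transitions and records each one with its actor. The states and transitions below are a hypothetical simplification of the steps listed above; real payer processes vary.

```python
from enum import Enum
from typing import List, Optional, Tuple


class AuthStatus(Enum):
    NEW = "new"
    ELIGIBILITY_VERIFIED = "eligibility_verified"
    REQUIREMENTS_IDENTIFIED = "requirements_identified"
    DOCUMENTS_ASSEMBLED = "documents_assembled"
    SUBMITTED = "submitted"
    APPROVED = "approved"
    DENIED = "denied"


S = AuthStatus
# Allowed transitions for a hypothetical workflow; real payer processes vary.
TRANSITIONS = {
    S.NEW: {S.ELIGIBILITY_VERIFIED},
    S.ELIGIBILITY_VERIFIED: {S.REQUIREMENTS_IDENTIFIED},
    S.REQUIREMENTS_IDENTIFIED: {S.DOCUMENTS_ASSEMBLED},
    S.DOCUMENTS_ASSEMBLED: {S.SUBMITTED},
    S.SUBMITTED: {S.APPROVED, S.DENIED},
    S.APPROVED: set(),
    S.DENIED: set(),
}


class PriorAuthRequest:
    def __init__(self, request_id: str) -> None:
        self.request_id = request_id
        self.status = S.NEW
        # Every transition is recorded as (from, to, actor).
        self.trail: List[Tuple[Optional[AuthStatus], AuthStatus, str]] = [
            (None, S.NEW, "system")
        ]

    def advance(self, new_status: AuthStatus, actor: str) -> None:
        """Move to new_status if the workflow allows it; otherwise refuse."""
        if new_status not in TRANSITIONS[self.status]:
            raise ValueError(f"{self.status.value} -> {new_status.value} is not allowed")
        self.trail.append((self.status, new_status, actor))
        self.status = new_status
```

Because skipped steps raise an error instead of passing silently, a request cannot reach `SUBMITTED` without an eligibility check and assembled documentation on record.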

Documentation integrity benefits from continuous monitoring. Agents can verify that required signatures are present, that documentation supports billed services, that all mandatory fields are completed, and that records maintain consistency across systems. Problems surface immediately rather than during retrospective audits.
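Two of these checks, mandatory-field completeness and cross-system consistency, can be sketched with plain dictionaries standing in for records from two systems (for example, an EHR and a billing platform). The field lists are hypothetical.

```python
from typing import Dict, List, Optional, Tuple

Record = Dict[str, str]

# Hypothetical mandatory fields for an encounter record.
MANDATORY_FIELDS = ("patient_id", "date_of_service", "provider_id", "signature")
# Hypothetical fields that must agree between systems.
SHARED_FIELDS = ("patient_id", "date_of_service", "provider_id")


def missing_fields(record: Record) -> List[str]:
    """Mandatory fields that are absent or empty."""
    return [f for f in MANDATORY_FIELDS if not record.get(f)]


def cross_system_mismatches(
    ehr: Record, billing: Record
) -> Dict[str, Tuple[Optional[str], Optional[str]]]:
    """Shared fields whose values differ, as field -> (ehr value, billing value)."""
    return {
        f: (ehr.get(f), billing.get(f))
        for f in SHARED_FIELDS
        if ehr.get(f) != billing.get(f)
    }


def integrity_report(ehr: Record, billing: Record) -> dict:
    """Combine both checks so problems surface together, at the time of entry."""
    return {
        "ehr_missing": missing_fields(ehr),
        "billing_missing": missing_fields(billing),
        "mismatches": cross_system_mismatches(ehr, billing),
    }
```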

Payer-provider data alignment addresses a chronic source of compliance failures. Agents can continuously verify that contracted rates match claim payments, that provider credentials remain current with all payers, and that network status is accurate across all platforms. Discrepancies trigger immediate investigation rather than accumulating until they become material.
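Credential currency across payers can be monitored with a simple look-ahead window. The enrollment table, dates, and 60-day window here are illustrative assumptions; the sketch flags credentials that have already expired or will expire within the window, so they can be addressed before they become material.

```python
from datetime import date, timedelta
from typing import List, Tuple

# Hypothetical enrollment records: (provider, payer) -> credential expiry date.
ENROLLMENTS = {
    ("DR-1", "PAYER_A"): date(2025, 6, 30),
    ("DR-1", "PAYER_B"): date(2025, 1, 15),
}


def credential_alerts(today: date, window_days: int = 60) -> List[Tuple[str, str, str]]:
    """Return (provider, payer, 'expired' | 'expiring') for credentials needing action."""
    horizon = today + timedelta(days=window_days)
    alerts = []
    for (provider, payer), expires in sorted(ENROLLMENTS.items()):
        if expires < today:
            alerts.append((provider, payer, "expired"))
        elif expires <= horizon:
            alerts.append((provider, payer, "expiring"))
    return alerts
```

Running this daily turns credential lapses into advance warnings instead of denied claims discovered after the fact.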

6. Recommendations

For Regulators

The regulatory instinct when facing new technology is often to restrict until proven safe. With agentic AI in health care operations, this instinct may be counterproductive. Organizations using well-designed agentic systems will likely demonstrate better compliance than those relying on traditional manual processes. They’ll have more complete documentation, fewer errors, and faster responses to changing requirements. Regulators should encourage the adoption of auditable systems by accepting system-generated compliance evidence and providing clear guidance on what constitutes acceptable automation in different contexts.

For Health Care Operators

Start with low-risk, high-burden workflows where the compliance rules are clear and the consequences of errors are financial rather than clinical. Revenue cycle and prior authorization are natural starting points. Build internal expertise by piloting with specific payers or service lines before expanding. Invest in change management: staff need to understand that agentic systems augment their capabilities rather than threaten their roles. Most importantly, insist on auditability — any system that cannot explain its actions is creating compliance risk rather than reducing it.

For Technology Builders

The temptation in AI development is to maximize capability. In health care compliance, the imperative is to maximize trustworthiness. This means separating language understanding from execution logic, maintaining deterministic behavior for all compliance-critical functions, building comprehensive audit trails, and designing for staged autonomy that keeps humans in control of consequential decisions. Token-heavy black-box approaches may be technically impressive but are fundamentally unsuitable for environments where explainability and consistency are requirements, not preferences.

Conclusion

Health care compliance doesn’t have to be a periodic scramble driven by audit calendars and institutional anxiety. Agentic AI, when designed with appropriate constraints and controls, can transform compliance into continuous, observable, and reliable infrastructure—reducing administrative burden, improving accuracy, and creating defensible records that serve organizations well when regulators come calling.

The technology is ready. The regulatory environment is receptive. The operational pain is acute. What remains is for health care leaders, technology builders, and legal advisors to work together in designing implementations that capture the benefits while managing the risks. This paper represents our commitment to that collaboration.

Compliance stops being a document. It becomes a system.

About the Authors

Natasha Allen is a partner at Foley & Lardner LLP and chairs its AI sector, specializing in health care regulatory compliance and operational risk management. She works with health systems, physician groups, and health care technology companies on compliance program design and regulatory strategy.

Sam de Brouwer is co-founder and CEO of XY.AI (XYCorp Ltd), building agentic AI infrastructure for health care operations. Her work focuses on deterministic execution architectures that learn continuously and enable enterprise-grade automation with full auditability.

Lamara de Brouwer is co-founder and CTO of XY.AI (XYCorp Ltd), where he leads engineering. He brings expertise in translating operational complexity into systematic, auditable processes.

Louis Lehot is a partner at Foley & Lardner LLP, where he advises companies at the intersection of health care and technology on formation, financing, scaling, governance, and exit planning. He has counseled numerous frontier-tech organizations on AI implementation strategies and regulatory frameworks.