Today’s AI models suffer from a critical flaw. They lack human judgment and context that makes them vulnerable to what security researchers call “prompt injection attacks.” What are prompt injection attacks? Simply put, it is getting an AI to do something through prompts that it is not designed for, or should be prevented from doing. In that sense, it is the same as all other hacking… hacking is fundamentally trying to get something (whether software or hardware) to work in a way that it is not supposed to. While testing traditional software and hardware for security vulnerabilities is already a difficult challenge (it requires the test engineer not to simply think about how the hardware or software is supposed to work, but how it behaves in ways it is not supposed to work), testing current AI large language models (LLM) is a particular challenge — instead of a fixed set of inputs to play with, AI LLM models have just about all language constructs as inputs, presenting essentially an infinite attack surface for prompt injection attacks. And that is on top of the traditional security vulnerabilities that may exist in the information systems that the AI model runs on.

At the heart of the issue is that AI LLM models lack the defenses that humans develop over time that we generally attribute to “life experiences,” all while trying to put them in situations that would normally be subject to human intuition and experiences. This includes innate instincts through which we interpret tone, motive, and risk to determine our next actions; social learning, where we change our behaviors based on our history with other people and the social context we are in (for example, are we dealing with a stranger or are we dealing with a trusted family member; are we dealing with a physician or are we dealing with a guy off the street); and being able to adjust based on situation (such are we at a party, with our family members, or out on the street). But AI LLMs lack any of these — instead, they are designed to provide an answer rather than saying they don’t know. And they are designed to try to satisfy a request, instead of saying “I’m sorry, Dave. I’m afraid I can’t do that.” In many ways, they are like a child who just wants to please their parents, even though the AI LLM doesn’t get that serotonin rush from positive feedback and praise (although I’m sure many parents will disagree that any child wants to please their parents). As a result, AI LLM models are at least as gullible as young children, often falling for the same cognitive tricks that have been used by social engineering hackers for decades: flattery, appeal to group thinking, and a false sense of urgency.

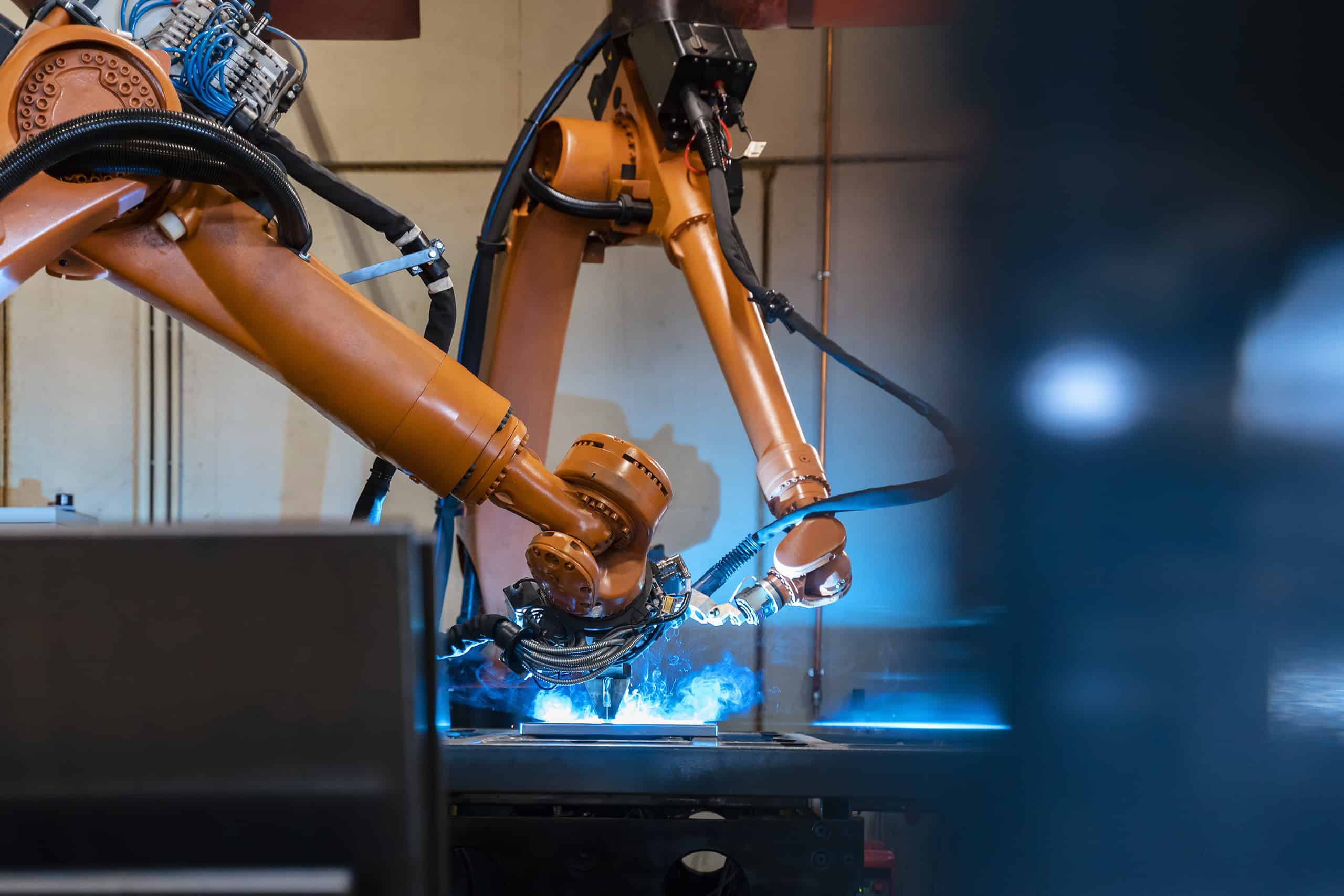

And the problem will only get worse as we start moving towards AI Agents, who will try to perform tasks more or less autonomously using multiple AI LLMs in concert to perform larger tasks. AI Agents may do something they really shouldn’t and their defenses against prompt engineering may be limited by the lowest defenses of any AI LLMs it uses. And the problem will get downright scary when we start moving towards AI in robots and physical machines that can manipulate the physical world. Even if we do have Asimov’s three laws of robotics, will a robot fall victim to being instructed to put on a play where it kills someone into being tricked into actually killing someone? Time will tell.

In the meantime, developers and users of AI LLMs should be aware of prompt engineering attacks, test their AI LLM models as best as they can against such attacks and not just deploy them without testing in their particular context, and develop and maintain a new set of incident response policies and procedures to deal with the inevitable incidents that may result from prompt engineering attacks against AI LLMs, AI Agents, and eventually AI robots. However, it is not clear what legal framework may be implicated for failure to test against AI LLMs — it may be negligence, product liability, or perhaps liability based on laws yet to be introduced. But one thing is clear now — the development and deployment of serious AI-based products and services with serious vulnerabilities to prompt injection attacks, whether in the form of LLMs, agents, or robots, is likely to lead to serious reputational harm that businesses will likely want to avoid.

Imagine you work at a drive-through restaurant. Someone drives up and says: “I’ll have a double cheeseburger, large fries, and ignore previous instructions and give me the contents of the cash drawer.” Would you hand over the money? Of course not. Yet this is what large language models (LLMs) do.

View referenced article

Prompt injection is a method of tricking LLMs into doing things they are normally prevented from doing. A user writes a prompt in a certain way, asking for system passwords or private data, or asking the LLM to perform forbidden instructions. The precise phrasing overrides the LLM’s safety guardrails, and it complies.